OSSTIP/WP5

WP5- Workflow for conversion of GIS data into PT network schedule

This is a Work Package as part of the OSSTIP project.

Inputs:

- Initial GIS data (OSSTIP/WP1),

- GIS data infrastructure (OSSTIP/WP3),

- and an updated GIS network of changed PT service lines and frequencies (could be synthetic, or real data from BZEs project work so far etc).

Outputs:

- software scripts or packages supporting conversion of data formats,

- documentation on how to use them.

Estimated Time: Medium

Status: Significant progress (2014-02-07)

Notes on extensions: In April 2014 we agreed with BZE to upgrade this conversion and creation workflow to allow for time-dependent schedules that reflect different vehicle speeds in peak and off-peak periods. See OSSTIP/WPBZE4 for notes on that work. Documentation about the scripts will be updated to reflect this change.

Requirements Summary

There are two key challenges with utilising software tools that visualise a public transport timetable:

- The decision-making involved in generating the actual timetable itself;

- The capacity to save the timetable in GTFS format.

The goal in this work package is to produce software scripts and/or tools that make this process as efficient as possible. This may involve either writing new scripts, or leveraging existing open source applications, such as TransitDataFeeder.

The envisaged starting point is a set of transport routes saved in a common GIS format, such as ESRI Shapefiles, as developed in OSSTIP/WP1. These would be accompanied by a "desired frequency" and "average speed" of each route – probably to be recorded as part of the GIS database.

From this expected starting point, the scripts and tools in this WP are envisaged to (a) create a set of "first cut" GTFS timetable files based on the above inputs; and (b) provide some kind of support or process for identifying where the generated timetable most needs further manual editing. This process should also be documented, so it can be reproduced as efficiently as possible throughout later stages of the project.

Results

20/11/2013 - intermediate work on relevant scripts

This is in progress, but we have designed a workflow from geometric routes and stops through to NEM files (needed by BZE for routing using Netview) and also GTFS.

Work is currently organised via two repositories on GitHub:

- https://github.com/hsenot/nemtools/ (Handles geometric aspects via PostGIS database queries, and NEM files output)

- https://github.com/PatSunter/SimpleGTFSCreator - Creates a GTFS schedule from a set of intermediate route segment files, stops, and specification of timetable frequency.

7/2/2014 - working process to create GTFS timetable using scripts, spatial databases and QGIS commands

Current Process

We now have a working process to convert a set of shapefiles, and auxiliary descriptive information, into a GTFS timetable. It is still rough in places and could be improved further.

It involved creating an 'intermediate format' describing route segments, stops, and frequencies along each stop.

The process is roughly as follows.

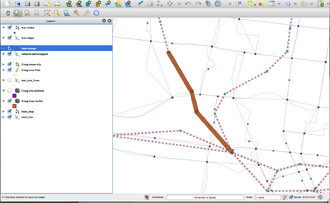

Initial inputs (see sample at right):-

- A Shapefile layer (vector) describing the routes for the network, that you wish to transform into a GTFS timetable.

- A Shapefile layer (vector) containing the stops along each of these routes.

- (NB - we later created a script to auto-add stops at a given distance along the route. Need to document here).

- Currently, we also need some auxiliary info as variables in a Python script:

  - Speeds of each transport mode (later, we want to be able to specify this per-route, and even per-segment).

  - A specification of service frequencies throughout the day.
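The auto-add-stops step mentioned in the inputs above is not documented here, so the following is only a minimal sketch of one way to do it, not the project's actual script. It assumes route coordinates are already in a projected, metre-based coordinate system; the function name `place_stops` and its parameters are hypothetical.

```python
import math

def place_stops(route_coords, spacing):
    """Return points every `spacing` units along a polyline, starting at its first vertex.

    route_coords: list of (x, y) tuples in a projected (metre-based) CRS (assumption).
    spacing: distance between consecutive stops, in the same units.
    """
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    stops = [route_coords[0]]
    dist_to_next = spacing  # distance still to travel before the next stop
    for (x0, y0), (x1, y1) in zip(route_coords, route_coords[1:]):
        seg_len = math.hypot(x1 - x0, y1 - y0)
        pos = 0.0  # distance already covered along this line segment
        while seg_len - pos >= dist_to_next:
            pos += dist_to_next
            frac = pos / seg_len
            stops.append((x0 + frac * (x1 - x0), y0 + frac * (y1 - y0)))
            dist_to_next = spacing
        # Carry the unused remainder of this segment into the next one.
        dist_to_next -= seg_len - pos
    return stops

# Hypothetical L-shaped route, with stops every 400 m.
example_stops = place_stops([(0, 0), (1000, 0), (1000, 500)], 400)
```

Measuring distance along the line, rather than straight-line distance between stops, keeps the spacing consistent around corners. A final stop at the route end is not forced here; whether to add one is a design choice for the real script.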

Then once this input is created, the conversion steps are roughly as follows:

- Run a series of Python scripts (see Software and Data Links) and GIS commands using the QGIS tool to convert these into an 'intermediate GIS format' we have developed that lists each route 'segment' between stops. You also need to run a script to create a mapping CSV file between segments and routes.

- Fill out a basic Python configuration file specifying some key high-level details of the schedule: the speed of each transport mode in your new routes, and the frequency of service throughout the day (currently all kept in the script itself).

- Run a second Python script that transforms the intermediate format and configuration from the steps above into a set of GTFS schedule files. For a large network, this step takes about 1-2 hours on a laptop computer.
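To illustrate the core of the final conversion step, here is a minimal sketch of how segment lengths and a mode speed can be turned into rows for a GTFS `stop_times.txt` file. It is not the SimpleGTFSCreator implementation: the function names, the trip and stop identifiers, and the assumption of a single constant speed with no dwell time are all hypothetical simplifications. The column names are standard GTFS fields.

```python
import csv
import io

FIELDS = ["trip_id", "arrival_time", "departure_time", "stop_id", "stop_sequence"]

def fmt_gtfs_time(seconds):
    """Format seconds after midnight as HH:MM:SS (GTFS allows hours of 24 and above)."""
    seconds = int(round(seconds))
    return "%02d:%02d:%02d" % (seconds // 3600, (seconds % 3600) // 60, seconds % 60)

def stop_time_rows(trip_id, stop_ids, segment_lengths_m, speed_kmh, start_secs):
    """Build stop_times rows for one trip, assuming constant speed and zero dwell time."""
    speed_ms = speed_kmh * 1000.0 / 3600.0
    rows = []
    t = float(start_secs)
    for seq, stop_id in enumerate(stop_ids):
        if seq > 0:
            # Travel time over the segment leading into this stop.
            t += segment_lengths_m[seq - 1] / speed_ms
        stamp = fmt_gtfs_time(t)
        rows.append({"trip_id": trip_id, "arrival_time": stamp,
                     "departure_time": stamp, "stop_id": stop_id,
                     "stop_sequence": seq + 1})
    return rows

def to_csv(rows):
    """Serialise rows as CSV text with a GTFS-style header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()

# Hypothetical trip: three stops, two segments, 36 km/h, departing 07:30.
rows = stop_time_rows("T1", ["A", "B", "C"], [600, 1200], 36, 7 * 3600 + 30 * 60)
print(to_csv(rows))
```

A full conversion would repeat this for every departure of every route and direction, which is consistent with the long run time noted above for large networks.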

Then once you've created the GTFS file, it is good to load the file into a suitable viewer such as the Google Transit Data Feed ScheduleViewer, as per screenshots below.

The GTFS can then also be tested in OpenTripPlanner once you build a new graph using this new file, and start a server - e.g. checking that new routed journeys make sense. See OSSTIP/Setup of OpenTripPlanner to display Melbourne PT network.

- Screenshot: testing a single bus route, just converted via the SimpleGTFSCreator conversion scripts.

- Screenshot: checking a bus route that is part of the BZE revised bus network plan in OSSTIP/WPBZE1.

Samples of headway definition

This is a headway definition over a day: services run every 5 minutes in peak hour, every 10 minutes inter-peak, and every 20 minutes late at night and early in the morning.

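    from datetime import time

    # Each entry is (period start, period end, headway in minutes).
    # Written without leading zeros (e.g. 5, not 05), which Python 3 rejects.
    default_service_headways = [
        (time(5, 0), time(7, 30), 20),
        (time(7, 30), time(10, 0), 5),
        (time(10, 0), time(16, 0), 10),
        (time(16, 0), time(18, 30), 5),
        (time(18, 30), time(23, 0), 10),
        (time(23, 0), time(2, 0), 20),  # wraps past midnight
    ]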
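As a rough illustration of how such a definition could be expanded into individual departures, the sketch below converts each period into departure times expressed as minutes after midnight. The function name `expand_departures` is hypothetical and this is not necessarily how SimpleGTFSCreator does it. A period whose end time is not later than its start (such as 23:00 to 02:00) is treated as running past midnight.

```python
from datetime import time

# Same values as the sample headway definition, repeated so this block runs on its own.
sample_headways = [
    (time(5, 0), time(7, 30), 20),
    (time(7, 30), time(10, 0), 5),
    (time(10, 0), time(16, 0), 10),
    (time(16, 0), time(18, 30), 5),
    (time(18, 30), time(23, 0), 10),
    (time(23, 0), time(2, 0), 20),
]

def expand_departures(headways):
    """Return departure times as minutes after midnight.

    Periods that wrap past midnight yield values of 24 * 60 or more,
    matching the GTFS convention of times beyond 24:00:00.
    """
    departures = []
    for start, end, headway_min in headways:
        start_min = start.hour * 60 + start.minute
        end_min = end.hour * 60 + end.minute
        if end_min <= start_min:
            end_min += 24 * 60  # period continues into the next day
        # The end of each period is exclusive; the next period starts there.
        departures.extend(range(start_min, end_min, headway_min))
    return departures

all_departures = expand_departures(sample_headways)
```

Keeping times as plain minutes, rather than `datetime.time` objects, avoids the problem that `time` cannot represent values past 23:59.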

Further Notes / Possible Extensions

Possible improvements

Done

- Variable speed per route (?).

- Yes, this capability is available as per the extension below.

- And could we create a script to modify the shapefiles so the speed on each segment is proportional to distance from the city, as L did for the Netview work?

- Update 2014-11-05: this extension was done as part of OSSTIP/WPBZE4. As of Oct 2014 there is also a SegmentSpeedModel class abstraction that allows more complex speeds per-route and per-time-period, so that an actual GTFS timetable can be matched more closely.

- Could we further automate and standardise the spatial database creation steps, and refactor the scripts so they don't require superuser access (via sudo) to the machine you're running them on?

- Update 2014-11-05: The SimpleGTFSCreator GitHub package has improved a lot since this comment. The tool can now work entirely by running Python scripts that don't rely on Nemtools and don't need superuser access for any steps. However, we wouldn't rule out using a spatial database in a future refactor, as it might be simpler for some operations and thus worth re-adding a database dependency.

- Per-route differing headway support.

- Update 2014-11-05:- This capability has been added as part of OSSTIP/WP6.
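The time-dependent speeds mentioned in the updates above can be pictured with a very small sketch. This is not the interface of the SegmentSpeedModel class in SimpleGTFSCreator; the function `speed_for`, the constant `PEAK_PERIODS`, and the speed values are hypothetical. The peak periods are taken from the sample headway definition earlier on this page.

```python
from datetime import time

# Peak periods assumed to match the sample headway definition (07:30-10:00, 16:00-18:30).
PEAK_PERIODS = [(time(7, 30), time(10, 0)), (time(16, 0), time(18, 30))]

def speed_for(dep_time, peak_kmh, offpeak_kmh, peak_periods=PEAK_PERIODS):
    """Pick the speed (km/h) that applies at a given departure time.

    Period ends are exclusive, so a departure at exactly 10:00 is off-peak.
    """
    for start, end in peak_periods:
        if start <= dep_time < end:
            return peak_kmh
    return offpeak_kmh

# Hypothetical bus speeds: slower in the peak because of congestion.
morning_peak_speed = speed_for(time(8, 0), peak_kmh=15, offpeak_kmh=25)
midday_speed = speed_for(time(12, 0), peak_kmh=15, offpeak_kmh=25)
```

A per-segment model would look up the peak and off-peak values for each segment instead of passing them in once per call.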

Still to do

- It would be good to further refactor the nemtools and SimpleGTFSCreator scripts so they are better organised, possibly putting them under a common dedicated 'user' on GitHub.

Software and Data Links

- https://github.com/hsenot/nemtools/ - Handles geometric aspects via PostGIS database queries, and NEM files output.

- https://github.com/PatSunter/SimpleGTFSCreator - Creates a GTFS schedule from a set of intermediate route segment files, stops, and specification of timetable frequency.

| Authors | Patrick Sunter |
|---|---|
| License | CC-BY-SA-3.0 |
| Organizations | Beyond Zero Emissions, University of Melbourne, Public Transport Users Association |
| Cite as | PatSunter (2013–2025). "OSSTIP/WP5". Appropedia. Retrieved June 4, 2026. |