CrashSavers Trauma/Refining Our Course

| Part of | CrashSavers Trauma |
|---|---|

Find out more about how the first prototypes were built and about CrashSavers' internal validation.

Prototype Testing

Our VR app underwent iterative prototype testing, first with the app developers and then with early users, who were introduced to the app to gather feedback. Early feedback described the app as having “incredible graphics”, being “intuitive to navigate”, and having videos that were “easy to understand”. The physical simulation model also went through several rounds of evaluation and prototype testing. Our team initially created a simulator that incorporated a pressure sensor to give users nuanced biophysical feedback about the amount of pressure required to stop blood flow through the model. This simulator required an Arduino to be installed and took longer to build.

Firefighters in early user testing (August 2021) and GSTC judges raised initial concerns about the reproducibility and ease of use of our model. We addressed this by working with our engineers to create a simplified version of the simulator. This model does not require any electronics, costs $55 compared to $90, and can be built by users in 1.5 hours compared to 20 hours, while still serving as a high-fidelity option for learners. In October 2021, we tested building the simple version of the simulator with Guatemalan firefighters. They successfully built the model using the instruction manual, with the entire process taking 45 to 90 minutes.
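As a quick check on the scale of these savings, the cost and build-time figures quoted above can be turned into percentage reductions. The sketch below is only illustrative arithmetic; the helper name `percent_reduction` is ours and is not part of the CrashSavers materials.

```python
def percent_reduction(old: float, new: float) -> float:
    """Return how much smaller `new` is than `old`, as a percentage of `old`."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (old - new) / old * 100.0


# Figures quoted in the text: advanced vs. simple simulator.
cost_saving = percent_reduction(old=90.0, new=55.0)  # US dollars
time_saving = percent_reduction(old=20.0, new=1.5)   # build hours

print(f"Cost reduction: {cost_saving:.1f}%")        # prints "Cost reduction: 38.9%"
print(f"Build-time reduction: {time_saving:.1f}%")  # prints "Build-time reduction: 92.5%"
```

In other words, the simple version costs roughly 39% less and takes roughly 92% less time to build than the advanced version, based on the stated figures.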

For additional pictures of the evolution of the simple and advanced versions through prototyping iterations, visit here.

Internal Validation

Our VR app and simulator underwent internal validation using 3 methods: 1) subjective validation of the physical model, 2) construct validation of the physical model, and 3) assessment of skill transfer from the VR app to the physical model. We tested 2 groups: 1) non-experts, who were medical students without experience in tourniquet application or trauma management, and 2) experts, who were physicians with experience in tourniquet application.

- Non-experts trying the VR application and DIY Tourniquet Simulator for validation

- Experts using our simulator for validation

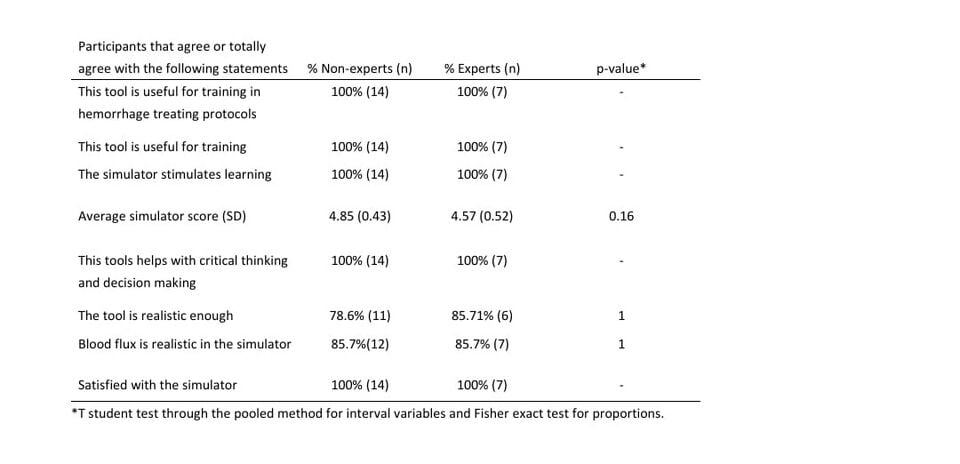

For subjective validation, non-expert and expert subjects placed tourniquets on the simulator, and later completed an 8-question survey on the simulator’s realism and quality, using a 5-point Likert scale. Results of the subjective evaluation are shown below (Table 1). We found no differences between non-experts and experts in the subjective evaluation of the module.

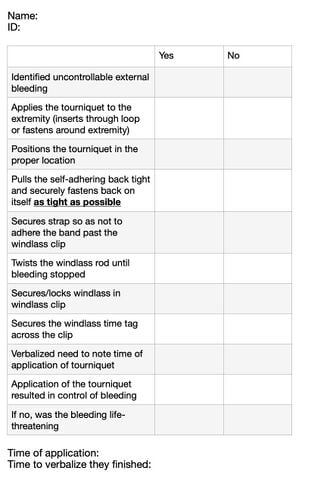

Objective validation was performed through a construct validation test where non-expert and expert subjects’ performance with tourniquet application was evaluated using a checklist to determine differences in skill between the two groups (Table 2). This checklist tool is adapted from Weiman (2019) to evaluate tourniquet application skills. Ten non-expert and 10 expert participants applied the tourniquet prior to completing the VR app modules. Table 3 reveals that experts place the tourniquet faster than non-experts at baseline, implying that the simulator is able to reveal differences in ability between non-experts and experts. We postulate that a larger test cohort will demonstrate differences in all items in the checklist.
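The text does not state which statistical test was used to compare the two groups. As one possible approach for small samples such as 10 versus 10 participants, the sketch below computes the Mann-Whitney U statistic for two independent groups using only the Python standard library. The choice of test and the function names `average_ranks` and `mann_whitney_u` are assumptions made for illustration, not the team's documented method.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j to cover the run of values tied with position i.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney_u(group_a, group_b):
    """Return (U_a, U_b) for two independent samples.

    U_a counts the (a, b) pairs in which the value from group_a is larger
    than the value from group_b, with ties counted as one half.
    """
    n_a, n_b = len(group_a), len(group_b)
    if n_a == 0 or n_b == 0:
        raise ValueError("both groups must contain at least one value")
    ranks = average_ranks(list(group_a) + list(group_b))
    rank_sum_a = sum(ranks[:n_a])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return u_a, u_b
```

With recorded application times, a call such as `mann_whitney_u(expert_times, non_expert_times)` would return a first value near 0 if experts were almost always faster. The smaller of the two U values would then be compared against a critical value table for the given group sizes to judge significance.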

Skills transfer validation was carried out by comparing non-experts prior to training with non-experts who had completed the case scenarios in the VR app and simulator training, using the same checklist tool. Table 4 shows early results for non-experts before and after training. We also compared the performance of non-experts and experts who had both completed training (Table 5), and we found no differences between the groups. This differs from our prior comparison between the 2 groups without training, which showed a significant difference in time to appropriate tourniquet application between non-experts and experts (Table 3).

References

Weiman, S. (2019). Retention of Tourniquet Application Skills Following Participation in a Bleeding Control Course. Journal of Emergency Nursing, Volume 46, Issue 2, 2020, Pages 154-162 (fig 1).

| Authors | CrashSavers |
|---|---|
| License | CC-BY-SA-4.0 |
| Organizations | Global Surgical Training Challenge |
| Cite as | CrashSavers (2021–2025). "CrashSavers Trauma/Refining Our Course". Appropedia. Retrieved June 4, 2026. |