Cardiac Surgical Skills Training Module/Phase 4: Self-Assessment

| Part of | Cardiac Surgical Skills Training Module |
|---|---|

A key element of any surgical simulator is its ability to give the trainee specific feedback on how she is performing the procedure relative to high clinical standards, and on what she needs to change to improve her performance. The simulator also needs to record learning progress.

The SELF-training box simulator has a feedback system based on sensor technology connected to the specially developed organ-like, tissue-mimicking model material. Three levels of psychomotor feedback have been achieved:

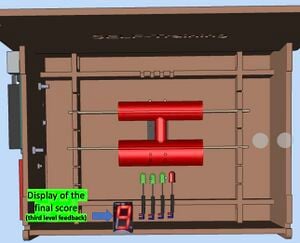

1) The sensor detects, directly and in real time, the traction that the trainee applies to the physical vascular model. This force is translated into a graded, color-coded LED feedback system, calibrated by clinical experts, that peaks in red when hazardous force is applied to the model. This is the first level of feedback [Figure 1], giving the trainee direct real-time feedback during the critical parts of the operation.

2) The same sensor also provides continuous digital output, shown as digits 0-9 on the digit display, which gives more detailed real-time feedback. The mobile phone camera continuously registers and stores these digits. The second level of feedback [Figure 1] is thus a statistical representation of the whole training session, with time spent and sensor output as the main factors.

Figure 1: First and Second Level Feedback

3) The third level of feedback [Figure 2] is provided when the training is completed: the training box calculates a final score for the performance using an internal algorithm that can be pre-programmed. The final score, from 0 to 9, gives quantitative feedback on the trainee's overall performance. This allows trainees to directly compare their performance across practice sessions and to record their psychomotor progress.

Figure 2: Third Level Feedback

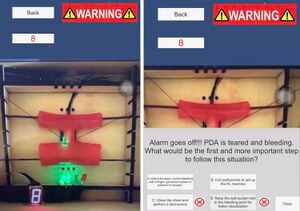
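The three feedback levels described above can be illustrated with a minimal Python sketch. The text does not give the LED color bands, the sampling interval, or the scoring algorithm, so the thresholds in `led_color`, the fields returned by `summarize_session`, and the formula in `final_score` (including the assumption that 9 is the best score) are hypothetical placeholders, not the simulator's actual implementation.

```python
from statistics import mean


def led_color(digit: int) -> str:
    """Level 1: map a sensor digit (0-9) to an LED color.

    The band limits (3 and 6) are assumed; the real bands are
    calibrated by clinical experts and are not stated in the text.
    """
    if not 0 <= digit <= 9:
        raise ValueError("sensor digit must be between 0 and 9")
    if digit <= 3:
        return "green"
    if digit <= 6:
        return "yellow"
    return "red"  # hazardous force


def summarize_session(digits: list, sample_period_s: float) -> dict:
    """Level 2: summarize a session from the recorded digits.

    Assumes the phone records one digit every `sample_period_s` seconds.
    """
    if not digits:
        raise ValueError("no samples recorded")
    red_samples = sum(1 for d in digits if led_color(d) == "red")
    return {
        "duration_s": len(digits) * sample_period_s,
        "mean_digit": mean(digits),
        "peak_digit": max(digits),
        "red_fraction": red_samples / len(digits),
    }


def final_score(summary: dict) -> int:
    """Level 3: assumed scoring rule on a 0-9 scale, where 9 is best.

    Penalizes high average force and time spent in the red band
    with equal (hypothetical) weights.
    """
    penalty = 0.5 * summary["mean_digit"] + 0.5 * 9 * summary["red_fraction"]
    return max(0, min(9, round(9 - penalty)))


if __name__ == "__main__":
    session = [1, 2, 2, 3, 8, 9, 2, 1]  # made-up sensor digits
    summary = summarize_session(session, sample_period_s=0.5)
    print(summary)
    print("final score:", final_score(summary))
```

Keeping the three levels as separate functions mirrors the description: the color mapping runs per sample, the summary runs over the stored session, and the score is computed once at the end.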

In addition, to train the response to emergency situations, an AI-enabled alarm system has been integrated into the app. Because the phone camera keeps monitoring the sensor's distance signal, the app can detect a potentially serious mistake during the surgery. One example demonstrated in the system is when the distance and force stay continuously above a certain threshold, resulting in rupture of the PDA. The app picks up this serious situation and displays a warning message together with a few action options. The trainee learns to deal with the emergency situation using the app.

Figure 3: AI warning of an emergency situation (LEFT) and action required (RIGHT)
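The "continuously over a certain threshold" condition can be sketched as a simple rule-based check. This is a plain run-length test, not the AI model the app uses, and the function name, the threshold value, and the number of consecutive samples are all assumptions chosen for illustration.

```python
def detect_sustained_overload(digits, threshold=7, min_consecutive=5):
    """Return the index of the sample at which an alarm would fire, or None.

    An alarm fires once `min_consecutive` samples in a row are strictly
    above `threshold`. Both parameters are hypothetical defaults.
    """
    run = 0
    for index, digit in enumerate(digits):
        # Extend the current run of over-threshold samples, or reset it.
        run = run + 1 if digit > threshold else 0
        if run >= min_consecutive:
            return index
    return None


if __name__ == "__main__":
    readings = [2, 8, 8, 3, 8, 8, 8, 8, 8, 1]  # made-up sensor digits
    print(detect_sustained_overload(readings))
```

Requiring several consecutive samples, rather than a single one, avoids raising the alarm on a brief spike.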

In a future development, we foresee using image recognition and deep learning to inform the trainee about deviations from the expected hand and instrument movements and instrument usage within the surgical field. Further, a deep-learning-based AI system will be trained using expert performance sensor data, harvested during testing and validation of the simulator, as ground truth. With this system, the trainee can get an automated comparison with expert performance, both in real time and as post-training statistics, with specific advice on which parts of the procedure need improvement.

In summary, the feedback is comprehensive, sensor- and image-based, and real-time, with summary functions for tracking progress. Based on representative expert performance data, cutoff scores will be provided to indicate when the trainee performs at expert level with sufficient stability and is ready to perform the procedure on patients.
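One way to read "sufficient stability" is that the trainee must reach the cutoff score in several consecutive recent sessions. The sketch below illustrates that reading only; the function name, the cutoff value, the number of required sessions, and the assumption that higher scores are better are all hypothetical, since the cutoffs are still to be derived from expert data.

```python
def meets_expert_cutoff(scores, cutoff, required_sessions):
    """Check whether the most recent sessions all reach the cutoff score.

    `scores` is the chronological list of final scores (0-9, higher
    assumed better). Returns False if fewer than `required_sessions`
    sessions have been recorded.
    """
    if required_sessions <= 0:
        raise ValueError("required_sessions must be positive")
    recent = scores[-required_sessions:]
    return len(recent) == required_sessions and all(s >= cutoff for s in recent)


if __name__ == "__main__":
    history = [4, 6, 8, 8, 9]  # made-up final scores
    print(meets_expert_cutoff(history, cutoff=8, required_sessions=3))
```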

| Authors | Self-training |
|---|---|
| License | CC-BY-SA-4.0 |
| Organizations | Global Surgical Training Challenge |
| Cite as | Self-training (2021–2025). "Cardiac Surgical Skills Training Module/Phase 4: Self-Assessment". Appropedia. Retrieved June 4, 2026. |